The Vercel Breach: How OAuth Became the Supply Chain

Breaches often happen because an attacker exploits a vulnerability, but that’s not what happened at Vercel. The Vercel breach happened because OAuth 2.0 did its job. The problem is that the job requirements have grown beyond what OAuth was designed for.

What happened in the Vercel breach

A Context.ai employee’s laptop was infected with Lumma Stealer malware in February 2026, according to Hudson Rock’s analysis. The malware harvested credentials, including ones that granted access to Context.ai’s AWS environment.

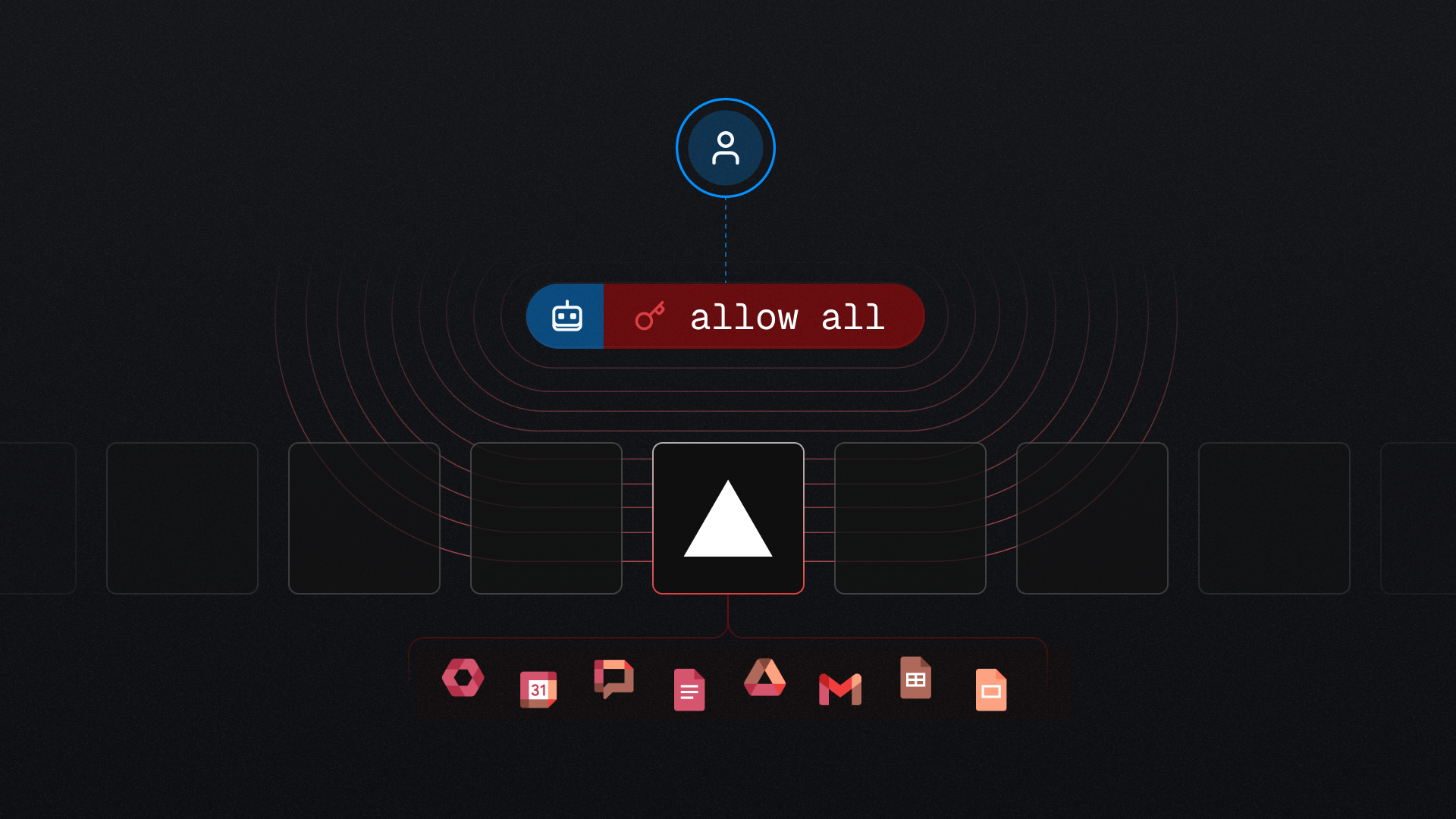

The attacker used that access to steal OAuth tokens belonging to users of Context.ai’s AI Office Suite. One of those tokens belonged to a Vercel employee who had signed up using their Vercel Google Workspace account and granted “allow all” permissions.

The attacker took over the employee’s Workspace account, used it to access Vercel, and enumerated environment variables that weren’t marked “sensitive.” In a thread on X, Vercel CEO Guillermo Rauch said he suspected the attacker was “highly sophisticated” and “significantly accelerated by AI.”

OAuth worked exactly as designed

The root of the problem isn’t a flaw in the underlying protocol. That protocol is OAuth 2.0, a delegated authorization framework that millions of services implement. A user authorized a third-party application. The application received a bearer token reflecting that user’s access. Google Workspace honored the token.

Every step was compliant with OAuth 2.0.

When OAuth 2.0 was published in 2012 as RFC 6749, its goal was to stop third-party apps from storing user passwords. It left decisions about scopes and access token lifetimes to implementers, and it assumes the third party is a trusted application acting on the user’s behalf.

In 2026, the third party is a SaaS vendor holding tokens for hundreds of downstream organizations. And when that vendor is an AI product, the pressure on scope and lifetime increases. Scopes are broad because narrow scopes limit the usefulness of AI products. Lifetimes are long because vendors need persistent access to function.

Context.ai confirmed this. The permissions their AI Office Suite requested “were intended to grant AI agents the ability to perform Google Workspace actions such as writing emails or creating documents on the user’s behalf.” That’s a feature of their agentic product. The breach happened because the AI product was used as intended.

OAuth 2.0 enables persistent access through the refresh token: a long-lived credential that the third party stores and exchanges for new access tokens without involving the user. A refresh token granted with “allow all” permissions can be exchanged for fresh, fully permissioned access tokens indefinitely, until someone revokes the original grant. That’s why Context.ai’s database was effectively a master key.
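To make the refresh-token mechanics concrete, here is a minimal sketch of the request a token holder sends, following the refresh_token grant in RFC 6749 §6. It only builds the form-encoded body; the token and client values are placeholders, and no particular provider’s endpoint is assumed.

```python
from urllib.parse import parse_qs, urlencode


def build_refresh_request(refresh_token: str, client_id: str, client_secret: str) -> str:
    """Form body for a refresh_token grant (RFC 6749, section 6).

    The optional "scope" parameter is omitted. Per RFC 6749, an omitted scope is
    treated as the scope originally granted, so an "allow all" grant keeps
    producing "allow all" access tokens.
    """
    # Nothing here involves the user: no password, no consent screen, no MFA.
    # Whoever holds these values can ask the token endpoint for a new access token.
    return urlencode({
        "grant_type": "refresh_token",
        "refresh_token": refresh_token,
        "client_id": client_id,          # clients may instead authenticate via HTTP Basic
        "client_secret": client_secret,
    })


# Placeholder values; nothing is sent anywhere.
body = build_refresh_request("stored-refresh-token", "vendor-client-id", "vendor-client-secret")
fields = parse_qs(body)
print(body)
# grant_type=refresh_token&refresh_token=stored-refresh-token&client_id=vendor-client-id&client_secret=vendor-client-secret
```

The point of the sketch is what’s missing: the request carries no signal from the user, so an attacker holding an exfiltrated database row can send exactly the same request as the vendor.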

OAuth supply chain attacks aren’t only an AI problem

This attack pattern predates AI. Compromise a SaaS vendor that holds OAuth tokens for its customers, then use those tokens to access customer environments. In 2025, the Salesloft Drift attack compromised Salesforce customers using stolen Drift tokens. Shortly after, the Gainsight breach followed the same pattern. Jaime Blasco of Nudge Security told Dark Reading that “OAuth tokens are the new attack surface.”

What AI changes is how much access it takes for an integration to be useful. A traditional integration could ask for one thing. An agent that does your work needs far more. AI productivity tools are designed to request the most permissive scope a user will tolerate, because narrow scopes limit the value of the product. That trade-off is becoming the default, and it needs a security model that doesn’t undercut usefulness.

How OAuth token exchange (RFC 8693) solves excessive privilege

More OAuth hygiene won’t solve this, because the root of the problem is that long-lived bearer tokens with broad scopes are the wrong primitive for SaaS-to-SaaS and agent delegation. The solution is replacing those credentials with something designed for the frequent, task-specific access transactions agents have to make.

RFC 8693, OAuth 2.0 Token Exchange, addresses this. Token exchange doesn’t rely on overly permissive, long-lived secrets. Instead, the client holds a subject token that represents the user. When the client needs access to a third-party resource, it presents the subject token in a just-in-time (JIT) exchange with a Security Token Service (STS).
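A minimal sketch of the request a client sends to an STS under RFC 8693 §2.1. The parameter names and URNs come from the RFC; the token values, the resource URI, and the choice of a JWT actor token are illustrative assumptions, and this is not Keycard’s specific API.

```python
from urllib.parse import parse_qs, urlencode

# Identifiers defined in RFC 8693.
TOKEN_EXCHANGE_GRANT = "urn:ietf:params:oauth:grant-type:token-exchange"
ACCESS_TOKEN_TYPE = "urn:ietf:params:oauth:token-type:access_token"
JWT_TOKEN_TYPE = "urn:ietf:params:oauth:token-type:jwt"


def build_token_exchange_request(subject_token: str, actor_token: str,
                                 resource: str, scope: str) -> str:
    """Form body for an RFC 8693 token exchange request (section 2.1)."""
    return urlencode({
        "grant_type": TOKEN_EXCHANGE_GRANT,
        # Who the access is for: the user.
        "subject_token": subject_token,
        "subject_token_type": ACCESS_TOKEN_TYPE,
        # Who is acting: the agent. Optional in the RFC, but it lets the STS
        # distinguish delegation from the user acting directly.
        "actor_token": actor_token,
        "actor_token_type": JWT_TOKEN_TYPE,  # required whenever actor_token is present
        # What it is for: one resource and a narrow scope, requested per task.
        "resource": resource,
        "scope": scope,
        "requested_token_type": ACCESS_TOKEN_TYPE,
    })


# Hypothetical values: a user token, an agent token, and a send-only Gmail scope.
body = build_token_exchange_request(
    subject_token="user-token",
    actor_token="agent-token",
    resource="https://gmail.googleapis.com/",
    scope="https://www.googleapis.com/auth/gmail.send",
)
fields = parse_qs(body)
```

Unlike the refresh request, every exchange names a specific resource and scope, which gives the STS something concrete to evaluate.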

RFC 8693 defines the exchange mechanism. An STS like Keycard adds the policy layer. Governance policies evaluate permissions using this specific request’s context: the user, the agent acting on their behalf, the action, and the resource. The STS issues an ephemeral, task-scoped token only if policy conditions are satisfied.
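The shape of that flow (evaluate policy on this request’s context, then mint a short-lived token or nothing) can be sketched as below. The `exchange` function, the five-minute lifetime, and the toy policy are hypothetical; a real STS would also sign the token and check the subject and actor tokens themselves.

```python
import secrets
import time
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class ExchangeRequest:
    """The context a policy sees: who, through which agent, doing what, to what."""
    user: str
    agent: str
    action: str
    resource: str


@dataclass(frozen=True)
class IssuedToken:
    value: str
    request: ExchangeRequest
    expires_at: float


TOKEN_TTL_SECONDS = 300  # assumed lifetime, meant to cover a single task


def exchange(request: ExchangeRequest,
             policy: Callable[[ExchangeRequest], bool],
             now: Optional[float] = None) -> Optional[IssuedToken]:
    """Evaluate policy for this specific request; mint a short-lived token only on allow."""
    if not policy(request):
        return None  # denied: nothing is issued, so there is nothing to steal later
    issued_at = time.time() if now is None else now
    return IssuedToken(
        value=secrets.token_urlsafe(32),  # opaque random value for the sketch
        request=request,
        expires_at=issued_at + TOKEN_TTL_SECONDS,
    )


def send_mail_only(req: ExchangeRequest) -> bool:
    """Toy policy: one agent may send mail for one user, and nothing else."""
    return (req.agent, req.user, req.action, req.resource) == (
        "office-agent", "alice@example.com", "send", "gmail")


ok = exchange(ExchangeRequest("alice@example.com", "office-agent", "send", "gmail"),
              send_mail_only, now=1000.0)
denied = exchange(ExchangeRequest("alice@example.com", "office-agent", "delete", "drive"),
                  send_mail_only)
```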

What architecture could have prevented this?

With an STS like Keycard, the access pattern changes. A company configures Google as an identity provider in Keycard. When an employee wants to use a third-party agent like Context.ai, they sign in once and grant that agent permission to act on their behalf for specific resources. Keycard records the delegated grant scoped to the user, agent, and resources, and brokers credentials for the upstream resources the user authorized.

When the agent needs to send an email on the user’s behalf, it doesn’t hold a Google credential. It asks Keycard to issue one. Keycard evaluates access policy against the request. Is this agent allowed to impersonate this user for this resource, with this scope, in this context? Keycard denies impersonation by default. Policy must explicitly enable it and specify the agent, the user, and the access being granted.
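Default-deny impersonation can be sketched as an explicit grant list that must name the agent, the user, the resource, and the scopes. The grant structure and scope names here are hypothetical, and the “in this context” checks from the question above are left out to keep the example small.

```python
from dataclasses import dataclass
from typing import FrozenSet, Set


@dataclass(frozen=True)
class ImpersonationGrant:
    """An explicit permission for one agent to act as one user on one resource."""
    agent: str
    user: str
    resource: str
    scopes: FrozenSet[str]


# Hypothetical grants an administrator has explicitly enabled. Everything else is denied.
GRANTS = [
    ImpersonationGrant("office-agent", "alice@example.com", "gmail", frozenset({"gmail.send"})),
]


def may_impersonate(agent: str, user: str, resource: str, requested: Set[str]) -> bool:
    """Default deny: allow only when a grant names this exact agent, user, and resource
    and covers every requested scope."""
    if not requested:
        return False  # refuse requests that don't name any scope
    return any(
        (g.agent, g.user, g.resource) == (agent, user, resource) and requested <= g.scopes
        for g in GRANTS
    )


print(may_impersonate("office-agent", "alice@example.com", "gmail", {"gmail.send"}))    # True
print(may_impersonate("office-agent", "alice@example.com", "gmail", {"gmail.modify"}))  # False: scope not granted
print(may_impersonate("office-agent", "bob@example.com", "gmail", {"gmail.send"}))      # False: no grant for this user
```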

The company registers Google Workspace as a resource in Keycard. Now Workspace access doesn’t flow through long-lived refresh tokens held in third-party databases. It flows through Keycard, governed by company policy. Policies can be specific: read-only on these document folders, write-with-human-approval on Gmail, deny admin actions, and restrict by request attributes.
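The example policies above (read-only on folders, approval-gated Gmail writes, no admin actions) can be sketched as a small default-deny rule table. The `service:path` resource names, the action names, and the “most restrictive rule wins” precedence are assumptions for illustration; Keycard’s actual policy language may look quite different, and request-attribute conditions are omitted.

```python
from dataclasses import dataclass
from enum import Enum
from fnmatch import fnmatchcase
from typing import FrozenSet


class Effect(Enum):
    ALLOW = "allow"
    REQUIRE_APPROVAL = "require_approval"
    DENY = "deny"


@dataclass(frozen=True)
class Rule:
    resource_pattern: str  # glob over hypothetical "service:path" resource names
    actions: FrozenSet[str]
    effect: Effect


# Hypothetical policy mirroring the examples in the text.
RULES = [
    Rule("drive:/shared/reports/*", frozenset({"read"}), Effect.ALLOW),      # read-only on a folder
    Rule("gmail:*", frozenset({"send", "draft"}), Effect.REQUIRE_APPROVAL),  # writes need human approval
    Rule("*", frozenset({"admin"}), Effect.DENY),                            # no admin actions anywhere
]


def decide(resource: str, action: str) -> Effect:
    """Most restrictive matching rule wins; with no matching rule, the answer is deny."""
    matched = {r.effect for r in RULES
               if action in r.actions and fnmatchcase(resource, r.resource_pattern)}
    if not matched or Effect.DENY in matched:
        return Effect.DENY
    if Effect.REQUIRE_APPROVAL in matched:
        return Effect.REQUIRE_APPROVAL
    return Effect.ALLOW
```

For instance, `decide("drive:/shared/reports/q1.pdf", "read")` allows, while the same call with `"write"` matches no rule and is denied.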

This is fine-grained authorization, which a standard OAuth “allow all” grant can’t express.

If policy permits, Keycard issues an ephemeral access token scoped for that resource and action. The token contains the user’s identity, the specific agent making the request, the session, and the resource it’s valid for. The agent uses the token to call Gmail and then discards it. The next agent action requires another exchange, another policy evaluation, and a fresh, task-scoped token.
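The claims such a token might carry can be sketched as a JWT payload. `sub`, `aud`, `iat`, `exp`, and `jti` are registered JWT claims (RFC 7519), `act` and `scope` are defined in RFC 8693, and `sid` is the registered session ID claim; the specific values, the 300-second lifetime, and the `build_task_token_claims` helper are hypothetical. The payload is left unsigned here, whereas a real STS would sign it.

```python
import secrets
import time
from typing import Optional


def build_task_token_claims(user: str, agent: str, session_id: str, resource: str,
                            scope: str, ttl_seconds: int = 300,
                            now: Optional[int] = None) -> dict:
    """Claims for a short-lived, task-scoped token (unsigned in this sketch)."""
    issued_at = int(time.time()) if now is None else now
    return {
        "sub": user,                  # the user the agent acts for
        "act": {"sub": agent},        # the party actually acting (RFC 8693, section 4.1)
        "sid": session_id,            # the session this exchange belongs to
        "aud": resource,              # the only resource that should accept this token
        "scope": scope,               # space-delimited scopes (RFC 8693, section 4.2)
        "iat": issued_at,
        "exp": issued_at + ttl_seconds,
        "jti": secrets.token_hex(8),  # random ID so each issued token can be tracked individually
    }


# Hypothetical values for one "send an email" task.
claims = build_task_token_claims("alice@example.com", "office-agent", "sess-42",
                                 "https://gmail.googleapis.com/", "gmail.send",
                                 now=1_700_000_000)
```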

Now consider the same attack against an architecture protected by an STS.

The attacker compromises Context.ai and exfiltrates everything in their database. They get the agent’s identity. What they don’t get is a working token for Google Workspace, or any of the user’s resources. Policy is evaluated when credentials are issued, not when they’re used. The credentials don’t sit in Context.ai’s storage. They exist in Keycard, behind policy, issued one at a time.

To act on a user’s resources, the attacker has to come back through Keycard for every exchange and satisfy policy that evaluates context: who’s asking, from where, in what session, for what action. A stolen agent identity replayed from attacker infrastructure carries different actor claims than a legitimate exchange. The differences may include a different identity provider session, different identity claims, or a different workload identity attestation. Policy can evaluate these attributes at exchange time and deny on a mismatch. The breach doesn’t propagate to customers protecting their resources behind an STS.
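An exchange-time mismatch check might look like the sketch below. It assumes, hypothetically, that the STS bound an identity provider session and a workload identity to the agent when the user delegated access; the SPIFFE-style ID and session strings are made up. A real policy would likely compare more attributes and handle legitimate changes such as session renewal.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ActorEvidence:
    """Attributes an STS might observe about whoever presents the agent identity."""
    agent_id: str
    idp_session: str           # identity provider session the exchange is bound to
    workload_attestation: str  # verified workload identity of the agent's runtime


# Hypothetical binding recorded when the user signed in and delegated access.
EXPECTED = {
    "office-agent": ActorEvidence("office-agent", "idp-sess-7f3a",
                                  "spiffe://vendor.example/agent-runtime"),
}


def actor_matches(presented: ActorEvidence) -> bool:
    """Deny if the agent is unknown or any observed attribute differs from the binding."""
    expected = EXPECTED.get(presented.agent_id)
    return expected is not None and presented == expected


legit = ActorEvidence("office-agent", "idp-sess-7f3a", "spiffe://vendor.example/agent-runtime")
# Same stolen agent ID, replayed from attacker infrastructure without the bound session or attestation.
replayed = ActorEvidence("office-agent", "idp-sess-attacker", "unattested")
```

Here `actor_matches(legit)` is true and `actor_matches(replayed)` is false: knowing the agent’s identity isn’t enough without the surrounding context.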

The takeaway: stop issuing credentials that outlive the task

OAuth gave Context.ai access to everything. It needed just-in-time access, evaluated in the context of each action, scoped to the task, and governed by policies that protect the company’s employees, data, and customers.

Vercel’s security bulletin recommends rotating non-sensitive variables, enabling MFA, and marking more things as sensitive. Those address the symptoms, but the structural fix is upstream: stop issuing overpermissioned, long-lived credentials.

The next breach following this pattern is coming. The only questions are: when? And which vendor?